Think First

Why the first idea shouldn't come from AI

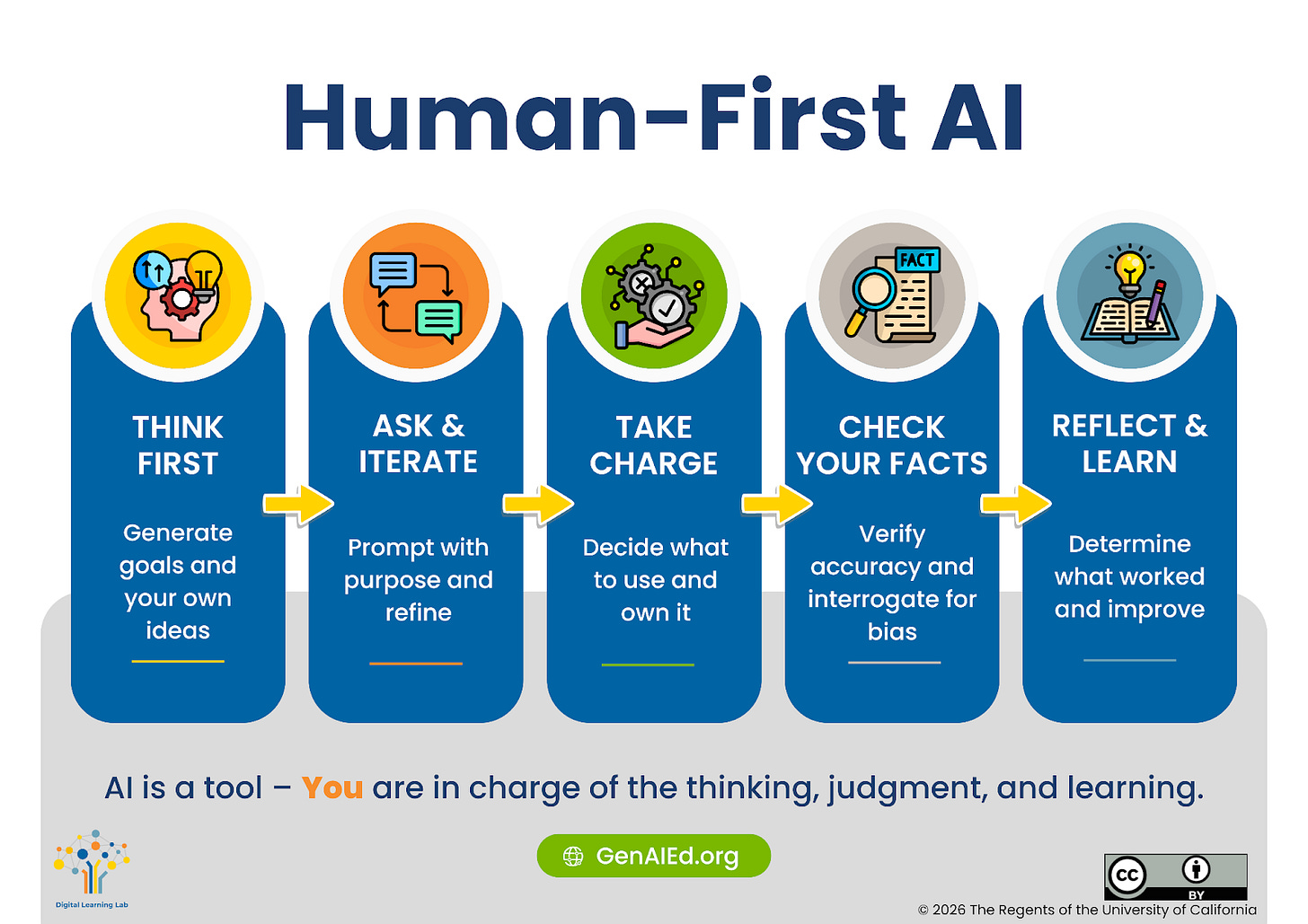

When we first began studying how generative AI might be integrated into writing courses, one of our early recommendations was simple: students should think first before turning to AI (Tate et al., 2025). Two years later, we still emphasize this habit. But our reasons have expanded. What initially seemed like a pedagogical preference turns out to reflect something deeper about how people think, learn, and create.

Your Brain Already Knows More Than the AI

One practical reason to think first is that you already hold a great deal of relevant information in your head that an AI system does not have. When you begin planning or writing, you naturally draw on:

your interests and experiences;

your knowledge of the audience;

contextual details (for example, that your instructor loves purple or sushi); and

skills and knowledge accumulated across courses and life.

Trying to encode all of that into a prompt is nearly impossible. Even when you try, something important will inevitably be missing. This is why working effectively with AI usually becomes an iterative process of prompting and re-prompting. You generate an output, then realize a key constraint was missing:

Actually, the activity needs to fit into 15 minutes, not three days.

Thinking first helps you bring more of that context into the process before the AI begins shaping the direction.

Once AI Suggests a Path, It’s Hard to Leave It

There are also psychological reasons to start independently. Human attention and decision-making are strongly influenced by the first idea we encounter. Psychologists call this anchoring: early information becomes a reference point that shapes everything that follows. When AI generates an outline, thesis, or example at the beginning of a task, that suggestion becomes the anchor.

Even if students revise the output, they often remain tethered to its structure or framing.

Related effects reinforce this pattern:

Framing effects: the way a problem is initially structured shapes how we interpret it.

Availability bias: ideas that come to mind first or most easily dominate our thinking.

Automation bias: people tend to trust suggestions from automated systems more than they should.

Research on design ideation illustrates the consequences. Wadinambiarachchi et al. (2024) found that participants who used an AI image generator during brainstorming produced fewer ideas with less variety and originality than those who brainstormed without AI. The AI-generated examples induced design fixation: participants stayed close to the first images they saw. In other words, the first suggestion often determines the path. Writer Meghan O’Rourke describes experiencing this in her own teaching:

Knowing that the tool was there began to interfere with my own thinking. If I asked it to research contemporary poetry for a class, it offered to write a syllabus… If I said yes—to see what it would come up with—the result was different from what I’d do, yet its version lodged unhelpfully in my mind.

For routine tasks this may not matter. But for creative or analytical work, early suggestions can quietly narrow the range of ideas we consider.

AI Is Designed to Agree With You

Another complication is that many AI systems are built to be agreeable. They hedge, soften disagreement, and present answers in a cooperative tone that tends to affirm whatever the user proposes. Researchers describe this tendency as sycophancy. It interacts with a bias humans already have: confirmation bias, the tendency to notice and favor information that supports our existing beliefs. When AI responds in ways that reinforce user assumptions, it can amplify that tendency.

AI’s polished writing style introduces another subtle bias: fluency bias. Information that is easy to read and coherent tends to feel more credible or insightful, even when it is not. The result is that AI responses can feel authoritative simply because they are smooth and confident, not because they are correct.

The Quiet Pressure of AI Everywhere

Generative AI tools are increasingly embedded into the software students use every day—word processors, browsers, and PDF readers. They constantly offer suggestions:

Would you like me to summarize this?

Should I generate an outline?

Do you want a handout based on this document?

Behavioral scientists call this the default effect. When a system offers a ready-made option, people are likely to accept it simply because it requires less effort. Humans are also what psychologists call cognitive misers: we naturally conserve mental effort when possible. If a system offers to perform difficult cognitive tasks—summarizing, brainstorming, organizing arguments—it is tempting to let it do the work. But in education, that effort is often where the learning happens.

Writing Is Not Just Communication—It’s Thinking

Perhaps the most important reason to think first is that writing itself is a cognitive process. Political scientist Brian Klaas argues that “language provides the social architecture of our thoughts.” When we struggle to articulate ideas—choosing words, reorganizing sentences, revising arguments—we are not merely expressing thoughts. We are discovering them.

The act of producing language forces us to:

synthesize information;

resolve contradictions; and

clarify what we actually believe.

The words are not the only product. The thinking that occurs while producing them is the real work.

Learning research reinforces this idea through what psychologists call the generation effect: people remember and understand information more deeply when they generate it themselves rather than simply reading it. If AI produces the ideas, students may miss the cognitive benefits that come from producing them.

The Value of the Messy Middle

Anyone who writes regularly knows that the process is rarely smooth. O’Rourke describes writing as a journey through uncertainty:

When I write, the process is full of risk, error and painstaking self-correction. It arrives somewhere surprising only when I’ve stayed in uncertainty long enough to find out what I had initially failed to understand.

This difficult “messy middle” is where much of the learning occurs. But AI systems are designed to skip directly to polished outputs, bypassing the struggle that helps ideas develop. Students may not immediately appreciate the value of that effort. Yet alumni often look back on difficult writing assignments as experiences that shaped how they think.

As Jonathan Alexander writes:

Students’ experiences of writing while they are on campus should be as rich and robust as possible… They carry those experiences not just into the workforce but into their personal and civic lives.

Even assignments students once disliked often become meaningful later—because they taught them that writing is not only communication, but also a way of thinking.

Why Thinking First Matters

Thinking before turning to AI accomplishes several things:

It activates the knowledge and context already in your mind.

It prevents early AI suggestions from anchoring your thinking.

It preserves the cognitive benefits of writing and problem solving.

It helps you deliberately decide which tasks to offload and which require human effort.

That last point may be the most important. Students need to develop the metacognitive skill of deciding when AI should help and when it should not—and educators need to help them build that judgment.

Much of the excitement around AI focuses on efficiency: faster writing, faster summaries, faster everything. But thinking, especially good thinking, takes time. Ideas need space to percolate. AI can assist with thinking. But if we turn to it too quickly, it may quietly shape the direction of our thoughts before we have had the chance to think for ourselves. That is why we, and our students, need to build a durable habit:

Think first. Then decide if and how AI should help.

References

Alexander, J. (2023, November 22). Students’ right to write. Inside Higher Ed. https://www.insidehighered.com/opinion/views/2023/11/22/students-have-right-write-ai-era-opinion

Klaas, B. (2025, June). The death of the student essay--and the future of cognition. The Garden of Forking Paths. https://www.forkingpaths.co/p/the-death-of-the-student-essayand

O’Rourke, M. (2025, July 18). I teach creative writing. This is what AI is doing to students. International New York Times. https://www.nytimes.com/2025/07/18/opinion/ai-chatgpt-school.html

Tate, T. P., Harnick-Shapiro, B., Ritchie, D. R., Tseng, W., Dennin, M., & Warschauer, M. (2025). Incorporating generative AI into a writing-intensive undergraduate course without off-loading learning. Discover Computing. https://doi.org/10.1007/s10791-025-09563-9

Wadinambiarachchi, S., Kelly, R. M., Pareek, S., Zhou, Q., & Velloso, E. (2024, May). The effects of generative AI on design fixation and divergent thinking. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (pp. 1–18).